Confusion matrix

6/23/2023

Classification Accuracy

Classification accuracy is one of the most critical parameters to determine because it defines how often the model predicts the correct output. In general, the higher the accuracy, the better the model. To calculate the model’s accuracy, consider the following formula:

Accuracy = (TP + TN) / (TP + TN + FP + FN)

Let’s apply it to the example of predicting patients with migraines.
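As a minimal sketch of the accuracy calculation, the snippet below plugs in the counts from this post's migraine example (100 true positives, 150 true negatives, 20 false positives, 30 false negatives). The function name `accuracy` and the variable names are only illustrative.

```python
# Counts taken from the migraine example in this post.
tp, tn, fp, fn = 100, 150, 20, 30


def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Return the share of all predictions that were correct."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("the confusion matrix is empty")
    return (tp + tn) / total


acc = accuracy(tp, tn, fp, fn)
print(f"accuracy = {acc:.4f}")  # 250 correct out of 300 -> 0.8333
```

In other words, the model classified about 83% of the patients correctly.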

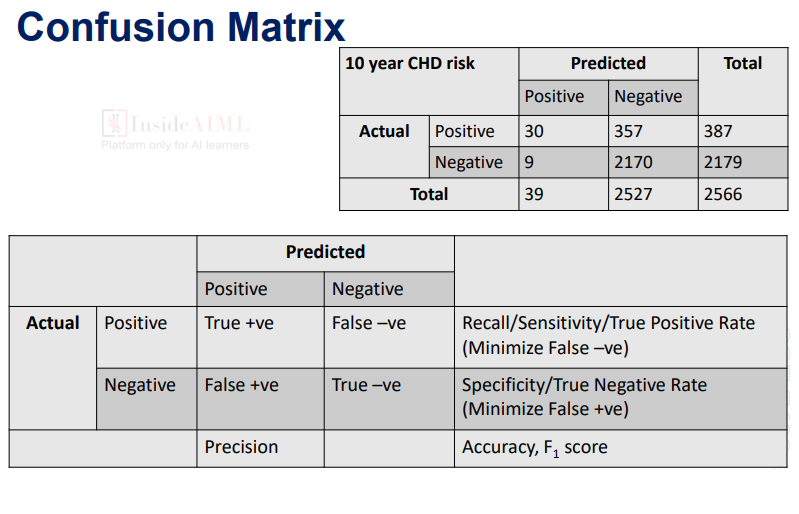

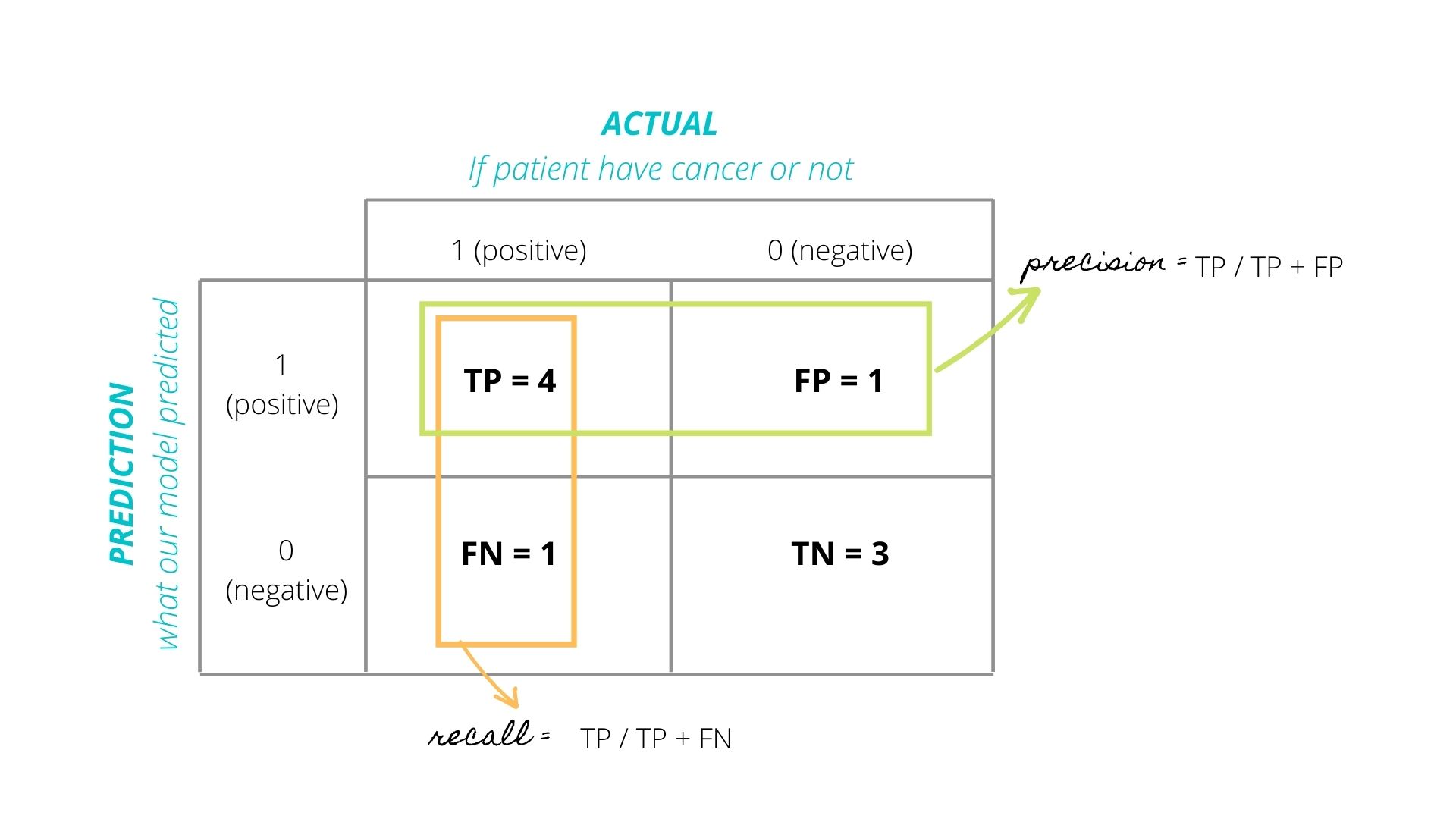

Once we have filled out the confusion matrix, we can perform various calculations to understand how well the model performs. With the number of True Positives (TP), True Negatives (TN), False Negatives (FN), and False Positives (FP) in hand, data scientists can determine the model’s classification accuracy, error rate, precision, and recall.
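The other calculations mentioned above can be sketched the same way. This example assumes the standard definitions of error rate, precision, and recall and reuses the counts from the migraine example in this post; the variable names are illustrative.

```python
# Counts taken from the migraine example in this post.
tp, tn, fp, fn = 100, 150, 20, 30
total = tp + tn + fp + fn

# Error rate: share of all predictions that were wrong.
error_rate = (fp + fn) / total

# Precision: of the patients predicted to have migraines, how many really do.
precision = tp / (tp + fp)

# Recall: of the patients who really have migraines, how many the model found.
recall = tp / (tp + fn)

print(f"error rate = {error_rate:.4f}")  # 50 / 300  -> 0.1667
print(f"precision  = {precision:.4f}")   # 100 / 120 -> 0.8333
print(f"recall     = {recall:.4f}")      # 100 / 130 -> 0.7692
```

Note that the error rate is simply one minus the accuracy, since every prediction is either correct or incorrect.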

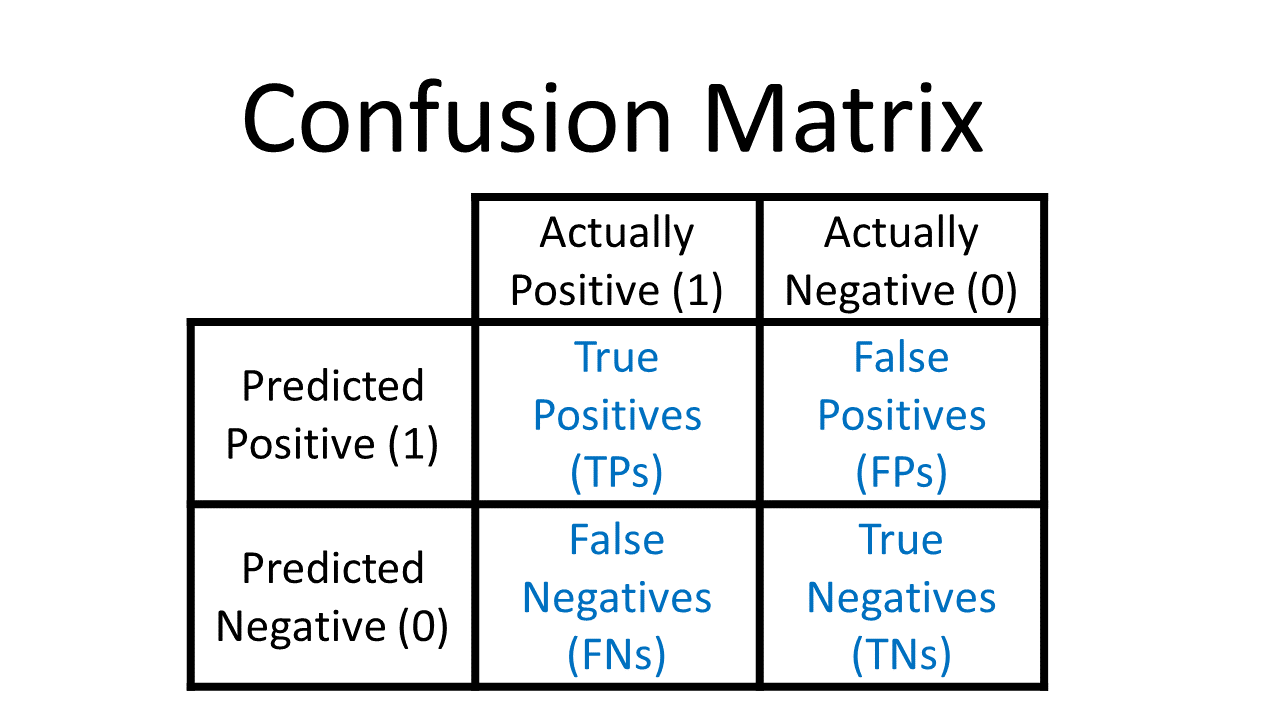

Let’s understand each value in the confusion table.

- True positive (TP): the value predicted by the model matches the actual value. The actual value was positive, and the machine learning model predicted a positive value.
- True negative (TN): the value predicted by the model matches the actual value. The actual value was negative, and the machine learning model predicted a negative value.
- False positive (FP): the machine learning model made a false prediction. The actual value was negative, but the model predicted a positive value. A false positive is also known as a Type I error.
- False negative (FN): the machine learning model made a false prediction. The actual value was positive, but the model predicted a negative value. A false negative is also known as a Type II error.

Let’s consider the following example to understand the confusion matrix and its values better. Suppose we want to create a model that can predict which patients suffer from migraines.

- True positives are the patients that suffer from migraines and that were correctly identified by the algorithm.
- True negatives are the patients that did not suffer from migraines and that were correctly identified by the algorithm.
- False negatives are the patients that suffer from migraines, but the algorithm stated they did not.
- False positives are the patients that do not have migraines, but the algorithm said they did.

In this example:

- There were 100 true positives, or patients who suffered from migraines and were correctly classified.
- There were 150 true negatives, or patients who did not suffer from migraines and were correctly classified.
- However, the algorithm misclassified 30 patients by stating they didn’t have migraines when they did, hence false negatives.
- The algorithm also misclassified 20 patients by saying that they did have migraines when they didn’t, hence false positives.

Consequently, the true positives and the true negatives tell us how many times the algorithm correctly classified the samples. On the other hand, the false negatives and false positives indicate how many times the algorithm made incorrect predictions.
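To make the four outcomes concrete, here is a small sketch that tallies them from paired lists of actual and predicted labels. The `tally` helper and the synthetic label lists are assumptions made for illustration; the lists are constructed only so that they reproduce the counts in the migraine example.

```python
def tally(actual, predicted):
    """Count TP, TN, FP, and FN from paired boolean labels (True = positive)."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for a, p in zip(actual, predicted):
        if a and p:
            counts["TP"] += 1   # has migraines, predicted migraines
        elif not a and not p:
            counts["TN"] += 1   # no migraines, predicted no migraines
        elif not a and p:
            counts["FP"] += 1   # no migraines, but predicted migraines (Type I)
        else:
            counts["FN"] += 1   # has migraines, but predicted none (Type II)
    return counts


# Synthetic labels built to match the example: True means "has migraines".
actual = [True] * 100 + [False] * 150 + [True] * 30 + [False] * 20
predicted = [True] * 100 + [False] * 150 + [False] * 30 + [True] * 20

counts = tally(actual, predicted)
print(counts)  # {'TP': 100, 'TN': 150, 'FP': 20, 'FN': 30}
```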
A confusion matrix is a performance measurement technique for a machine learning classification algorithm. Data scientists use it to evaluate the performance of a classification model on a set of test data when the actual values are known. Classification accuracy alone can be misleading, especially when the dataset has more than two classes or the classes are unevenly represented. Consequently, calculating the confusion matrix helps data scientists understand the effectiveness of the classification model.

The confusion matrix visualizes the accuracy of a classifier by comparing the actual values and the predicted values. In addition, it presents a table layout of the different outcomes of the prediction. In that table:

- The target variable has two values: positive or negative.
- The columns represent the actual values of the target variable, the known truth.
- The rows correspond to the predicted values of the machine learning algorithm.
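The layout described above can be sketched as a 2x2 nested list, with rows for the predicted values and columns for the actual values. The counts come from the migraine example in this post; the variable names and the printed labels are illustrative only.

```python
# Counts taken from the migraine example in this post.
tp, tn, fp, fn = 100, 150, 20, 30

# Rows are predicted values, columns are actual values.
matrix = [
    [tp, fp],  # predicted positive: actual positive, actual negative
    [fn, tn],  # predicted negative: actual positive, actual negative
]

# Print the matrix as a small labelled table.
print(f"{'':<20}{'Actual positive':>18}{'Actual negative':>18}")
row_labels = ("Predicted positive", "Predicted negative")
for label, row in zip(row_labels, matrix):
    print(f"{label:<20}{row[0]:>18}{row[1]:>18}")
```

Some libraries and textbooks put the actual values in the rows instead, so it is worth checking which orientation a given tool uses before reading the cells.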
